Stop Throwing Out the Transcripts

Your Org Has Operational Alzheimer’s, and AI Agents Are Making It Worse

Most engineering orgs are past the honeymoon with AI. Nobody’s seriously asking anymore whether to work with agents. We’re already at the much more mature, much less sexy stage of “how do we manage this thing without burning the company’s budget?”

Suddenly the questions from above sound different.

What are we running? Why? How much context? Where is a smaller model enough? When do we compress? And how do we explain to leadership what came out of all this besides an inflated bill?

Classic. The minute money enters the picture, you have an ops problem.

And when managers see an ops problem, they measure what they can measure: cost, calls, seats, usage. All of which matters. Sure. But it’s been chewed to death in dozens of other posts. In this one I want to step back and look at the problem from a different angle.

How does an organization keep building on what it already understood?

I’m building a cumulative argument here, so hang on. It starts with money, runs through transcripts, memory, context, and monorepos, so it might look like I’m jumping between topics. I’m not. It’s the same argument climbing one floor at a time, and at the end it converges into practical recommendations. Good luck reading the whole thing, byyyyye.

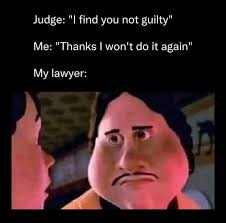

The Code Is the Verdict. The Transcript Is the Record.

The verdict says what was decided. Guilty, not guilty. Granted, denied. But a lawyer walking into a new case can’t work from the verdict alone. She needs to know what questions were asked, which evidence was thrown out, which argument convinced the judge, and where the call was made that decided the case.

Same with code.

The diff says what changed.

The transcript tells you how we got there.

The problem is we still treat it like temporary garbage.

What we call “logs,” “transcripts,” or “session history” today is actually the most valuable raw material the system produces. It just looks like waste because we don’t have a factory that recycles it yet. Like an old industry venting heat into the air, until one day someone figures out how to capture that heat and turn it into energy. Agents produce a cognitive byproduct. The question is when we stop treating it like smoke and start building a power plant around it.

Stop Burning Cash, Brother

In my view, one of the mistakes orgs make with agents is measuring adoption by usage. How many model calls, how many tokens, how many sessions, how many PRs got opened. It’s easy to measure, but it doesn’t say much. High usage can be the sign of a team firing on all cylinders. It can also be exactly the opposite: poorly framed problems, context rot, and developers generating a lot of motion around very little understanding.

When it gets easier to build, it gets easy to build too much.

You see it in elaborate architectures for tiny problems, layers nobody asked for, and code with a low ROI relative to its maintenance burden. Why does this happen? Because an agent, by default, is a continuation machine. It doesn’t feel the future weight of what it’s adding. It doesn’t get up out of the chair and say, “wait, maybe if we drop this requirement, the whole problem disappears.” It doesn’t walk over to product to ask whether that edge case is really worth two weeks of future complexity.

And here’s where the transcript gets interesting again. Because it’s the place where you can see not only whether we understood the problem, but whether the solution was proportional to it.

And allllll of this costs money.

Sorry, cost money.

You already paid for it.

You didn’t just pay for the code the model spat out at the end. That’s usually the cheap part. You paid for the entire road there: the context, the questions, the corrections, the mistakes, the tools, the calls, and all the other tokens that got burned along the way trying to figure out what we were even building.

It’s a bit like paying a lawyer for all the prep work, and then keeping only the judge’s last sentence.

Nice. You won. Now try to figure out why.

It’s absurd, because we already know there’s value in this information. Otherwise we wouldn’t have spent years on the corporate documentation saga: retros, design docs, meeting notes, documents that people write because they have to and others don’t read because they’re also busy writing documents nobody will read.

It’s expensive.

It’s unnatural.

And it usually misses the moment when understanding actually formed.

And now, of all moments, when a huge chunk of this documentation gets generated automatically, by a robot, as a byproduct of the work itself, now we throw it out? Is this really how I’m getting eliminated? Over a slice of onion?

There’s No Such Thing as a Bad Agent, Just an Agent Having a Bad Time

You know that moment when you have to open a new tab and switch agents?

There’s a tiny second of dread there.

Like you’re switching therapists and now you’re starting over with three sessions of “let’s get to know each other,” and you walk out three hours and 1500 shekels later. That’s exactly the symptom of context that isn’t anchored.

It happens because in every tool today the context is trapped inside a single conversation. There’s no real persistent workspace. There’s a loop. There are tools. There’s context. Maybe short-term memory. But a shared reality that the agent, the developer, and the team can lean on over time? Doesn’t exist.

Every new window starts almost from scratch.

“Hi Grandma, it’s me, Adir. Yes, Ad-... your grandson. Yes I’m sure you have a grandson... we talked yesterday for three hours about the permissions system. No, I’m not hungry. No no no Grandma, please don’t rebuild auth from scratch, stop stop stop.”

And so, every time:

Explain the feature again.

Re-mention the constraints. Repeat what we already agreed on.

And if you have a few agents running in parallel? Pffff, grab some popcorn because it’s a party. One agent makes progress in one session, another agent makes progress in another session, and suddenly you don’t just have two different changes to the code. You have two different versions of reality. Now tell me in the comments, “hellooo dude, that’s what an orchestrator is for, that’s what agents.md, skills, rules, plugins, and a dispatcher agent are for.”

And it’s true. Partially.

Orchestration can manage a workflow.

It doesn’t necessarily synchronize understanding.

The lead agent doesn’t make all the sub-agents see the same reality. It mostly delegates work. Each sub-agent still lives in its own bubble, with a different slice of context, different memory, and decisions that don’t make it back to the shared space in time.

Meaning: you didn’t solve the context problem.

You added a management layer to it.

A real workspace (which doesn’t exist today) should do something else: learn alongside the developer in real time, and hold onto the understanding that gets built along the way, so that even if the window switched, the agent switched, the branch switched, or god forbid the developer switched, the work continues from the same point of understanding.

Some people call this Context Anchoring: the realization that decisions, reasons, constraints, and current state can’t live only inside the conversation window. Because that’s the least stable place in the system. They need to live somewhere new sessions, humans, and other agents can pick up from.

Everyone needs to draw from the same understanding.

And move toward the same goal.

No matter which machine or process they’re running on.
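To make the anchoring idea a bit more concrete, here is a minimal sketch of what "living outside the conversation window" could look like. Everything in it is an assumption for illustration: the `ContextAnchor` record, its field names, the file name, and the example values are made up, and this is not how any particular tool stores context. It only shows the shape of the move: one session writes down decisions, reasons, constraints, and current state, and the next session reads them before doing anything else.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path


@dataclass
class ContextAnchor:
    """Hypothetical record of what a session understood (not a real tool's schema)."""
    feature: str
    current_state: str = ""
    decisions: list[str] = field(default_factory=list)
    reasons: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)


def save_anchor(anchor: ContextAnchor, path: Path) -> None:
    """Write the anchor to disk so it outlives the conversation window."""
    path.write_text(json.dumps(asdict(anchor), indent=2), encoding="utf-8")


def load_anchor(path: Path) -> ContextAnchor:
    """Read the anchor back in a new session, agent, or branch."""
    return ContextAnchor(**json.loads(path.read_text(encoding="utf-8")))


def opening_brief(anchor: ContextAnchor) -> str:
    """Render the anchor as the first thing a fresh session gets to read."""
    lines = [f"Feature: {anchor.feature}", f"Current state: {anchor.current_state}"]
    lines += [f"Decided: {d}" for d in anchor.decisions]
    lines += [f"Because: {r}" for r in anchor.reasons]
    lines += [f"Constraint: {c}" for c in anchor.constraints]
    return "\n".join(lines)


# Session one ends: persist what was understood (temp dir used only for the demo).
anchor_path = Path(tempfile.mkdtemp()) / "permissions.anchor.json"
save_anchor(
    ContextAnchor(
        feature="permissions system",
        current_state="role model agreed, migration not started",
        decisions=["extend the existing auth service"],
        reasons=["a rewrite would break current sessions"],
        constraints=["do not rebuild auth from scratch"],
    ),
    anchor_path,
)

# Session two starts: a different window picks up from the same point.
brief = opening_brief(load_anchor(anchor_path))
print(brief)
```

In a real setup the file would probably sit next to the code it describes instead of in a temp directory, so that whoever checks out the branch gets the understanding along with the diff.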

And once we agree on that, the next step is obvious: this memory needs to stop being personal.

It needs to start being team-level. Group-level. Org-level. In short: distributed.

From Private Memory to Hive Mind

The moment the workspace starts remembering, not the individual agent but the workspace itself, it stops being a personal tool and becomes a team asset.

Imagine your agent has access to the collective brain of the strongest people on the team. How they think, how they decompose problems, what solutions they’ve already tried, and what they’ve ruled out. You tag @adirbrain and you get all of my understanding of the microservice. “Almost full cognitive sync” (a reference, if you know you know).

Your agent is touching an area I worked on recently? It tags my brain and sees the decisions I made, why I made them, and where it’s okay to compromise. Now your agent has my experience, not my code, my experience. Which means you know what I know. And I know what you know.

And that’s worth gold in the moments when knowledge disappears.

We all know what happens when a senior dev leaves a team. It’s usually a blow, because a big chunk of the unwritten context leaves with them. So out of pure fear you tell them: “forget the sprint, sit down, write a whole document for two months, maybe we’ll need it someday.”

But if the thinking layer is preserved, that knowledge doesn’t disappear. It stays, and it’s available to everyone, from now until the blessed exit, may its name be sanctified.

And once we agree on that, and we stop thinking about agent memory as a personal archive and start thinking about it as an active collective brain, you reach an unavoidable conclusion: this hive mind can’t stay the property of the developers. It has to absorb every discipline in the company.

All for One, One for All

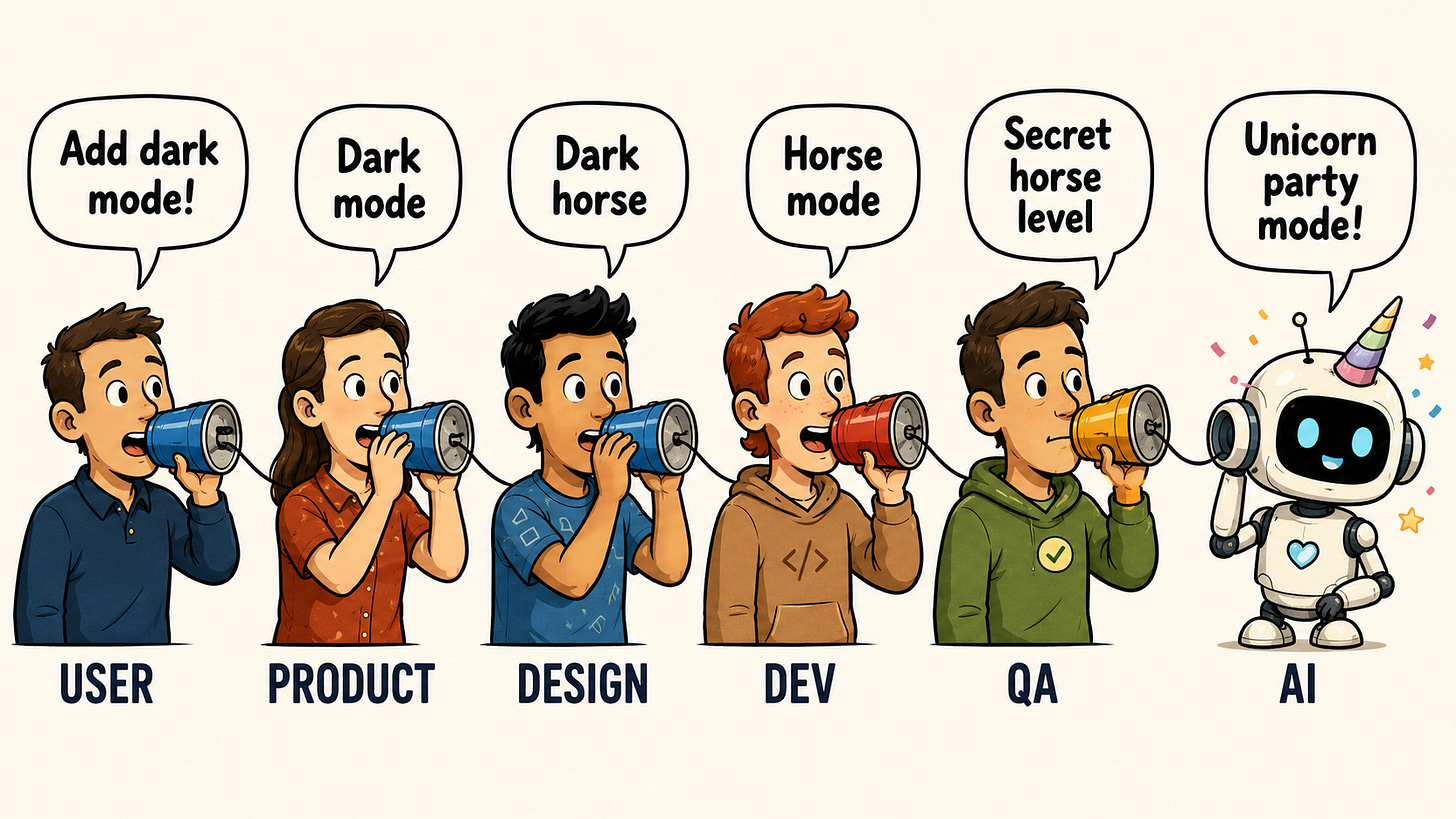

A feature always felt to me like a game of telephone.

The intent starts way before the developer. It’s born somewhere with the users, gets distilled through product, takes shape in design, moves to engineering, gets tested by QA, and at every handoff something drops. A nuance. A reason. A constraint.

That’s the problem with intent: it doesn’t travel well across handoffs.

You can summarize it, document it, open a ticket, write acceptance criteria, do a kickoff, record a Loom, do sync meetings.

And still, at every. 👏 handoff. 👏 the. 👏 intent. 👏 loses. 👏 resolution.

So what’s the fix? Write another doc between the stations? Nooooo (please no, I’m begging). The fix is to reduce the number of stations. Keep the intent as close as possible to where decisions get made and executed.

I think Figma is a good analogy, but correct me if I’m wrong.

Before Figma, design was a lot of “my file,” “the latest version,” “send me a copy,” “not that one, the other one, the updated one, final-final-for-real.” Then Figma turned the document itself into a living, shared space. The work, the decisions, and the current state all live in the same place.

The same shift needs to happen around development with agents.

Product sharpens the problem with an agent, and the decisions get saved as part of the feature’s context. A developer comes in later, and their agent already understands who we’re building for, what matters, where it makes sense to compromise, and where it’s a hard line. Design and QA come into the same space and see the same picture: what the intent was, what got built, and what needs to be tested.

In that setup, the agent stops being just a code generator.

It becomes the connective tissue between everyone.

Now wait wait wait wait.

Who knowsssss

what happenssss

when everyone starts working in the same place??????

Right!!! Conflicts!! Yayyy.

We already know this one. When two developers touch the same file, git stops us and says: hold up, reality has split. Resolve it among yourselves and come back to me.

But when product, design, support, and leadership are holding different versions of the intent? Nothing stops them. And that’s much more dangerous than a merge conflict. Because a code conflict you can see. An intent conflict only reveals itself after you’ve built the wrong thing.
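As a thought experiment, this is roughly what a "merge conflict for intent" check could look like. It is a deliberately naive sketch: it assumes each discipline's view of a feature can be written as topic/statement pairs, and it compares statements as exact strings. Real intent is much fuzzier than that, and the function name `find_intent_conflicts` and the sample data are invented for the example.

```python
from collections import defaultdict


def find_intent_conflicts(
    views: dict[str, dict[str, str]],
) -> dict[str, dict[str, str]]:
    """Return every topic on which at least two disciplines disagree."""
    by_topic: dict[str, dict[str, str]] = defaultdict(dict)
    for discipline, statements in views.items():
        for topic, statement in statements.items():
            by_topic[topic][discipline] = statement
    # A topic is in conflict when its holders do not all say the same thing.
    return {
        topic: holders
        for topic, holders in by_topic.items()
        if len(set(holders.values())) > 1
    }


# Made-up views of the same feature, as held by three disciplines.
views = {
    "product": {"export_limit": "10k rows", "audience": "admins only", "format": "CSV"},
    "design": {"export_limit": "10k rows", "audience": "all users", "format": "CSV"},
    "support": {"export_limit": "unlimited"},
}

conflicts = find_intent_conflicts(views)
for topic, holders in conflicts.items():
    print(f"Reality has split on '{topic}': {holders}")
```

The point is not the comparison logic. The point is that the check can only run once the different versions of the intent are written down in the same place.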

So the next layer that needs to enter the development workspace is product itself. And then design. And then support. And then... hmm... actually, every critical knowledge layer should get what code already got a long time ago: ownership, versioning, history, search, and a structured way to update from inside the work.

And unfortunately (and fortunately), none of this can rest on context-window tech alone.

A Model Per Company? 😯

A general code model knows a lot about software.

It knows languages, libraries, patterns, common bugs, common fixes. It can write TypeScript, Python, Java, SQL, and sometimes even explain why it “chose a simple, scalable approach” while creating one more abstraction nobody asked for. Whatever.

But your org doesn’t operate inside “software in general.”

It operates inside a specific product.

With specific users.

Specific tech debt.

And a ton of history that doesn’t exist in any public repo.

If you have a complex permissions system, it’s not enough that the model knows how permissions are built. It needs to understand how you build permissions. If there’s an area of the code that looks crooked but holds critical behavior for a big customer, a general model isn’t going to figure that out on its own. From its perspective, it’s a bad practice. From yours, it’s a postmortem.

The general model brings broad knowledge from the world.

You have broad knowledge of the org.

That’s a huge difference.

Today most agents still behave like global systems. They get better when the vendor releases a new model, when the harness changes, or when someone adds another instruction file.

But inside the org, nobody cares how the model scores on whichever SWE-bench variant. We care how much more accurate it is for my developers, on my code, in my product, in my repo.

And if you think this is far off, Cursor is already describing exactly this direction with their Composer model.

Their argument is that public benchmarks aren’t close enough to how developers actually work with agents. So first, they trained the model to operate in a real agent environment against large codebases. Then they built CursorBench, an internal evaluation built from real sessions of their engineering team.

And then in the post on real-time reinforcement learning they close another piece of the loop: Composer is already running in production, users are working with it, and their reactions feed back into the system as training signals. The system trains a new model version, validates it, and pushes it back out to production at a pace of up to several times a day.

The most interesting part comes at the end: they say explicitly that they’re exploring how to adapt Composer to specific organizations or specific kinds of work, in places where the coding patterns differ from the general distribution. Meaning, a model that improves based on a specific user population, specific practices, and maybe in the future a specific organization.

Today Cursor learns from usage of Cursor. Tomorrow orgs will want to learn from their repo, their transcripts, their local decisions, and the way the work actually gets done at their place.

So.

If we agreed that context needs to be anchored, that memory needs to be team-level, that intent needs to be shared, and that the next model won’t just know code but will learn the org itself, then there’s one question left: in what shape does the org need to make itself available to the agent?

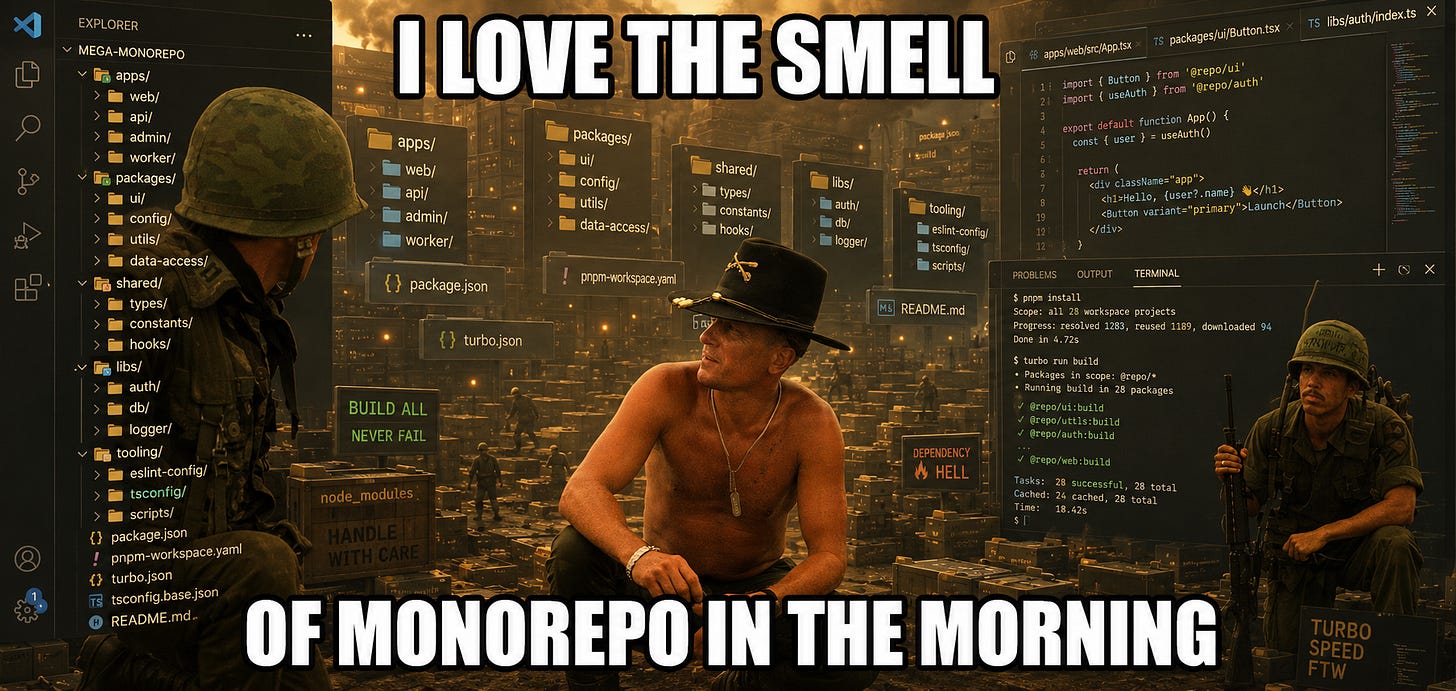

Monorepo FTW

The monorepo originally solved a technical problem. Instead of every service, library, and tool living on its own island, you bring them into the same workspace. Easier to see dependencies between parts, make cross-cutting changes, keep versions in sync, and understand how one system touches another.

In the world of agents, this idea gets several times stronger.

Imagine the org as a dataset. Every decision, every transcript, every design, every bug, every compromise, every customer pain that came up five times in support. All of these are the learning material the agent is supposed to use to understand how this org thinks. A bug, for instance, isn’t just incorrect behavior in code. A bug is an excellent question about priorities. There are orgs where it’s a thank-you-and-goodbye. There are orgs where the same bug, specifically for that customer, is all hands on deck.

And most orgs don’t look like a clean dataset.

They look like a crime scene.

A bit of truth in Jira.

A bit in Figma.

A bit in Slack.

A bit in email.

A bit in the repo.

It’s terrible, but for humans it somehow works, because humans fill in gaps through gossip, memory, politics, knowing the people, scrolling in the bathroom, and the wonderful ability to understand that when Dennis writes “we’ll check on this later” he actually means “over my dead body.”

An agent doesn’t know that. Dennisssss.

Most knowledge in the org lives like a stray cat. Everyone knows it, everyone has seen it once, nobody quite knows whose it is, where it sleeps, or whether it’s still alive.

This doesn’t work when agents need to act on this cat.

Because an agent doesn’t just need “access to information.” Access to information is the primitive stage. I have access to the entire Drive too, that doesn’t mean I understand what the hell is going on in there.

An agent needs to know what type of knowledge it is, who owns it, when it changed, what it’s connected to, and what it’s supposed to affect.

In other words: the knowledge needs to go from “something lying somewhere” to “a unit in the system.”
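One way to picture "a unit in the system" is as a small typed record that carries exactly the properties listed above: type, owner, change date, connections, and what it affects. The sketch below is an assumption, not a standard: `KnowledgeUnit`, its field names, the ids, and the paths are all hypothetical, and matching by path prefix is just the simplest lookup I could think of.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class KnowledgeUnit:
    """Hypothetical unit of org knowledge; field names are illustrative."""
    unit_id: str
    kind: str                           # what type of knowledge it is
    owner: str                          # who owns it
    changed_on: date                    # when it last changed
    summary: str
    connected_to: tuple[str, ...] = ()  # ids of related units
    affects: tuple[str, ...] = ()       # path prefixes it is supposed to affect


def units_affecting(units: list[KnowledgeUnit], path: str) -> list[KnowledgeUnit]:
    """Return the units an agent should read before touching `path`."""
    return [u for u in units if any(path.startswith(prefix) for prefix in u.affects)]


# Made-up units from two disciplines.
units = [
    KnowledgeUnit(
        unit_id="support-017",
        kind="customer-constraint",
        owner="support",
        changed_on=date(2025, 1, 15),
        summary="Legacy role mapping must keep working for one large customer.",
        connected_to=("product-042",),
        affects=("services/auth/",),
    ),
    KnowledgeUnit(
        unit_id="design-003",
        kind="design-rationale",
        owner="design",
        changed_on=date(2025, 2, 3),
        summary="Export dialog stays a single step.",
        affects=("web/export/",),
    ),
]

relevant = units_affecting(units, "services/auth/roles.py")
print([u.unit_id for u in relevant])  # ['support-017']
```

With something like this, the crooked-looking code that protects a big customer stops being folklore: an agent about to edit that path gets the constraint and its owner before it "fixes" anything.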

This is where the monorepo concept can come in.

If we just thought about org knowledge as packages:

Product package.

Design package.

QA package.

Leadership package.

Support package.

Infra package.

Each one holds the memory of its discipline: what we learned, what decisions were made, what’s not allowed to break, where it’s okay to compromise, and which patterns keep repeating.

Call it “company-brain” or “mono-organization” or whatever.

What’s certain is that just like code shouldn’t live only in one developer’s head, product intent, design rationale, BI insights, support pain, and leadership decisions shouldn’t live in separate systems, side documents, and conversations that people remember only because they were there.

They need to be part of the same workspace people and agents operate from.

Product sharpened a problem? Product memory updates.

Design changed direction? The rationale is preserved.

QA spotted a recurring pattern? Goes into testing memory.

Support sees the same pain again and again? It stops being an anecdote and starts being a signal.

And if this sounds too grand, keep reading.

Shut Up and Take My ~~Money~~ Tokens

First, put the credit card back in your pocket.

There isn’t yet one product that solves all this. There isn’t a clear standard. And even if someone is promising you a “context-aware AI workspace for the enterprise,” breathe into a paper bag for a sec.

This problem sits across too many layers: code, transcripts, decisions, organizational knowledge, agent memory, and sync between people.

So before running to pick a tool, you need to understand something:

Where is your context leaking?

Intent Layer

If your problem is that intent breaks down before or while the agent touches code, this is the relevant family.

OpenSpec, GitHub Spec Kit, Kiro, BMAD, Agent OS, Taskmaster AI, and Tessl sit in this area. They try to turn an idea, requirement, or vibe into a defined spec the agent can work against.

Agent Trace

If your problem is that you don’t know how or why the final diff was born, this is the relevant family. It tries to preserve the path to it: prompts, transcripts, tool calls, files touched, decisions made along the way, and which model did what. Cursor themselves released a spec on this called Agent Trace.

Entire, Tapes, and Git AI sit here. Entire treats an agent session as a record that should sit next to the source code. Tapes generates a layer of local understanding. Git AI takes it down to the line level: which code was generated by which agent, from which model, and in what context.

Not the most comfortable to work with. But thank god there’s a business model.
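For a feel of what "preserving the path to the diff" means in data terms, here is a rough sketch of a trace record stored next to the code, one JSON object per line. To be loud about it: this is not the Agent Trace spec and not the format used by Entire, Tapes, or Git AI. The `TraceRecord` fields simply mirror the list above (prompts, tool calls, files touched, decisions, model), and the commit id, model name, and file paths are placeholders.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TraceRecord:
    """Hypothetical per-commit trace; NOT the Agent Trace spec or any tool's format."""
    commit: str
    model: str
    prompts: list[str] = field(default_factory=list)
    tool_calls: list[str] = field(default_factory=list)
    files_touched: list[str] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)


def to_jsonl(records: list[TraceRecord]) -> str:
    """Serialize records as JSON Lines so they can be appended and diffed."""
    return "\n".join(json.dumps(asdict(r)) for r in records)


def decisions_behind(jsonl_text: str, path: str) -> list[str]:
    """Answer 'why does this file look like this?' from the stored traces."""
    found = []
    for line in jsonl_text.splitlines():
        record = json.loads(line)
        if path in record["files_touched"]:
            found.extend(f'{record["commit"]}: {d}' for d in record["decisions"])
    return found


# Placeholder data: commit ids, model name, and paths are invented.
trace_log = to_jsonl([
    TraceRecord(
        commit="a1b2c3d",
        model="example-model",
        prompts=["Add role checks to the export endpoint"],
        tool_calls=["read_file services/auth/roles.py", "run_tests"],
        files_touched=["services/auth/roles.py", "web/export/api.py"],
        decisions=["kept legacy role mapping instead of migrating it"],
    ),
    TraceRecord(
        commit="e4f5a6b",
        model="example-model",
        files_touched=["web/export/api.py"],
        decisions=["capped export size after timeout in tests"],
    ),
])

print(decisions_behind(trace_log, "services/auth/roles.py"))
```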

Codebase Context

If your problem is that the agent walks into the codebase like a tourist with too much confidence, this is the relevant family.

Augment, Pharaoh, Unblocked, and Tabnine Context Engine sit on this axis.

The goal here is to let the agent understand the system before it touches it: graphs, architecture, dependencies, patterns, ownership, and what usually breaks when you touch what.

Organizational Context

If your problem is that the org’s real knowledge is scattered across a bunch of separate systems, this is the relevant family.

Graphlit, Glean, Contextual AI, and kapa.ai sit here. They try to connect documents, meetings, Slack, email, code, documentation, tickets, people, permissions, and the relationships between things, to build the agent a picture of the organizational world.

This is the family that bridges between the model’s general knowledge and the org’s specific knowledge.

Agent Memory

This one is more for developers. If your pain is that every session starts from scratch, this is the most direct family.

Zep and Graphiti, mem0, Letta / MemGPT, Supermemory, Total Recall, Cognee, Memoria, Chroma, Hindsight, and Mastra Observational Memory sit here.

Some build graph memory out of conversations, some chunk them into episodes, some compress sessions, and more.

To wrap up, can I prophesy for a sec?

All of this looks today like a market of separate tools, but in my view it’s just how a new category cooks before it has a name.

At first it looks like a lot of problems: the agent doesn’t remember, the codebase isn’t understood, the transcript gets thrown out, the knowledge is scattered, the intent breaks along the way. It only looks like an agent problem, but it’s not. The chat is just the clearest microcosm of it.

All of these are symptoms of the same problem:

The org can’t continue from itself.

Every time it understands something, it loses part of the understanding along the way. It makes a decision, then leaves it somewhere nobody will find. It teaches an agent, then opens a new session and explains again.

This is operational Alzheimer’s at the org level.

Or, in developer language: the org is producing events but not updating state.

A meeting is an event. A session with an agent is an event. A PR comment is an event. A call with a customer is an event. A design decision is an event.

A lot happens. A ton, absolutely. But the org’s memory state barely changes. So it pays again and again for context recovery, because that context never became part of the system.
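In developer language, the "events but no state" picture can be sketched as a plain reducer. This is an illustration under assumptions, not a design: the `Event` and `OrgState` types are made up, and "learned" stands in for whatever understanding an event actually produced. Events that capture nothing leave the state exactly where it was, which is the whole complaint.

```python
from dataclasses import dataclass, field
from functools import reduce
from typing import Optional


@dataclass(frozen=True)
class Event:
    """Something that happened; `learned` is None when nothing was captured."""
    source: str
    topic: str
    learned: Optional[str] = None


@dataclass
class OrgState:
    """Hypothetical org memory: what is understood, grouped by topic."""
    understood: dict[str, list[str]] = field(default_factory=dict)


def apply_event(state: OrgState, event: Event) -> OrgState:
    """Fold one event into the state; uncaptured events change nothing."""
    if event.learned is not None:
        state.understood.setdefault(event.topic, []).append(event.learned)
    return state


# Four events happen, but only two leave anything behind (made-up data).
events = [
    Event("meeting", "export", learned="cap exports at 10k rows"),
    Event("agent_session", "export"),
    Event("pr_comment", "auth", learned="legacy role mapping must stay"),
    Event("customer_call", "export"),
]

state = reduce(apply_event, events, OrgState())
updates = sum(len(items) for items in state.understood.values())
print(f"{len(events)} events -> {updates} state updates")
```

The agent session and the customer call in this toy example are the expensive ones, and they are exactly the ones that vanish.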

The moment this gets solved and integrated into a workspace with AI, an organizational nervous system is born.

A layer that holds the understanding being created during work and feeds it back into the next piece of work, in real time. Every interaction would leave behind a small improvement in the starting point for the next session: what the org already understood, what it already tried, where it already failed, and what it already agreed on.

This is the move from silos to hive mind.

So I have two takeaways. One for managers and one for developers.

For Managers: Squeeze the Lemon

Pay attention to what you’re measuring. There’s a difference between an org that accelerates and an org that accumulates.

Acceleration says you did more work in less time.

Accumulation says that work changed the starting point for next time.

Agents will give everyone acceleration. There’s no big long-term advantage there. Everyone will get more code, more PRs, more experiments, more motion.

The advantage goes to whoever turns that motion into accumulating understanding: working memory, organizational context, and better judgment in the next round.

This is also the line between an expense and an asset. If at the end of the session only the artifact remains, you paid for work and you got work. If understanding remains too, you paid for work and you got an asset that keeps returning value even after the feature ships.

Without it, the org is squeezing the lemon for a single glass of juice, then throwing out the peel, the seeds, and the tree that could have grown from them.

For Developers: You Are the Gap AI Is Trying to Close

We tend to think model companies are competing mostly with each other. OpenAI vs. Anthropic vs. Google. And that’s true, but it’s only half the story. They’re also competing with you, or more precisely: with everything you still do better than the model.

Think of it like parity against a competitor. If a competitor has an important feature you don’t, you want to close the gap. Same here. Every quality gap you produce above the agent, whether it’s judgment today or architecture tomorrow or whatever, will always be a gap the companies want to close. Because as long as that advantage stays with you, value stays outside the model.

On the other hand, every competitive edge you do manage to produce above the agent will also stay as a trace the agent can learn from. Which means more and more pieces of your experience won’t stay only with you. They’ll start living inside the models and inside the org: in transcripts, memories, rules, skills, and agents that learn to continue from a higher point.

So you’re not just competing with the general agent. You’re also competing with the previous version of yourself inside it, the one already absorbed by the system.

This is maybe the biggest challenge for developers in this generation: not to prove you have value. Obviously you do. The challenge is to keep being the source of the next value, after your previous value has already been absorbed into the system.

— Adir